OpenAI, Anthropic and Google Unite Against China: The AI Distillation War and the Frontier Model Forum

Bloomberg reveals OpenAI, Anthropic and Google are sharing real-time detection data to block adversarial distillation from China. DeepSeek at the center.

They fight for the title of best AI model in the world. But against China, they stand together. A Bloomberg exclusive on April 6 reveals that OpenAI, Anthropic and Google are now sharing real-time detection data to block "adversarial distillation" — the technique that lets you copy years of R&D in a few weeks of API calls. It's the first operational cooperation between these three rivals. And DeepSeek is at the center.

Adversarial distillation: copying GPT-4 without paying for compute

AI distillation is a well-known, legitimate technique. It involves training a small model on the outputs of a large model. Every lab uses it. That's how you get fast, cheap models from frontier models.
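As a rough illustration of the legitimate version, the sketch below pairs prompts with a larger "teacher" model's outputs to form training examples for a smaller "student". Everything here is an assumption made for illustration: `toy_teacher` stands in for a real model call, and `build_distillation_dataset`, `to_jsonl` and the record layout are hypothetical names, not any lab's actual pipeline.

```python
import json
from typing import Callable, Iterable


def toy_teacher(prompt: str) -> str:
    """Stand-in for a large model; a real teacher would be an actual model call."""
    return f"Answer to: {prompt}"


def build_distillation_dataset(
    prompts: Iterable[str], teacher: Callable[[str], str]
) -> list[dict]:
    """Pair each prompt with the teacher's output to form supervised examples."""
    return [{"prompt": p, "completion": teacher(p)} for p in prompts]


def to_jsonl(records: list[dict]) -> str:
    """Serialize records as one JSON object per line."""
    return "\n".join(json.dumps(r) for r in records)


dataset = build_distillation_dataset(
    ["What is 2 + 2?", "Define entropy."], toy_teacher
)
print(to_jsonl(dataset))
```

The student would then be fine-tuned on these prompt/completion pairs. That training step is omitted here because it depends entirely on the framework in use.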

Adversarial distillation is the offensive version. The principle: flood frontier APIs (GPT, Claude, Gemini) with millions of precisely crafted queries designed to extract the model's reasoning patterns. Collect the outputs. Use them as training data for a competing model. The result: two to three years of R&D and billions of dollars in compute, copied in a few weeks.

A determined actor can generate millions of synthetic queries covering every domain: math, code, reasoning, cybersecurity. The outputs become a frontier-quality training dataset — without the frontier investment.
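From the defender's side, that traffic profile (very high volume spread across many domains) is something a provider could in principle look for. The sketch below is only a toy heuristic under stated assumptions: the `Query` fields, the domain labels and both thresholds are hypothetical, and the article does not describe how the labs actually detect distillation.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class Query:
    actor_id: str  # hypothetical account or API-key identifier
    domain: str    # hypothetical topic label, e.g. "math" or "code"


def flag_suspect_actors(queries, min_queries=1000, min_domains=4):
    """Return actor IDs whose traffic is both high-volume and unusually broad."""
    per_actor = defaultdict(Counter)
    for q in queries:
        per_actor[q.actor_id][q.domain] += 1

    flagged = []
    for actor, domains in per_actor.items():
        total = sum(domains.values())
        # Both conditions must hold: heavy usage alone is not suspicious.
        if total >= min_queries and len(domains) >= min_domains:
            flagged.append(actor)
    return sorted(flagged)


# Illustrative traffic: one bulk account cycling through four domains,
# one ordinary account sending a handful of coding questions.
domains = ["math", "code", "reasoning", "cybersecurity"]
traffic = [Query("acct-bulk", domains[i % 4]) for i in range(2000)]
traffic += [Query("acct-normal", "code") for _ in range(50)]

print(flag_suspect_actors(traffic))  # prints ['acct-bulk']
```

A real system would need far more than two thresholds, since a large enterprise customer can look much like this bulk account on volume alone.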

The textbook case: DeepSeek R1, January 2025. Near-GPT-4o performance at a fraction of the cost. The entire world asked the same question: how is this possible? The likely answer that no one wanted to say officially: massive distillation from US APIs. OpenAI had detected abnormal usage patterns on its APIs in late 2024. Sam Altman refused to publicly confirm — but didn't deny it either.

| Type | Definition | Legality | Actor |
|---|---|---|---|
| Legitimate distillation | Train a small model on large model outputs | ✅ Legal | All labs |
| Adversarial distillation | Flood APIs to extract reasoning patterns | ⚠️ Gray area / ToS violation | State actors / competitors |
| DeepSeek R1 case | GPT-4o-level performance at fraction of cost (Jan 2025) | ⚠️ Unproven suspicions | DeepSeek (China) |

The Frontier Model Forum goes operational

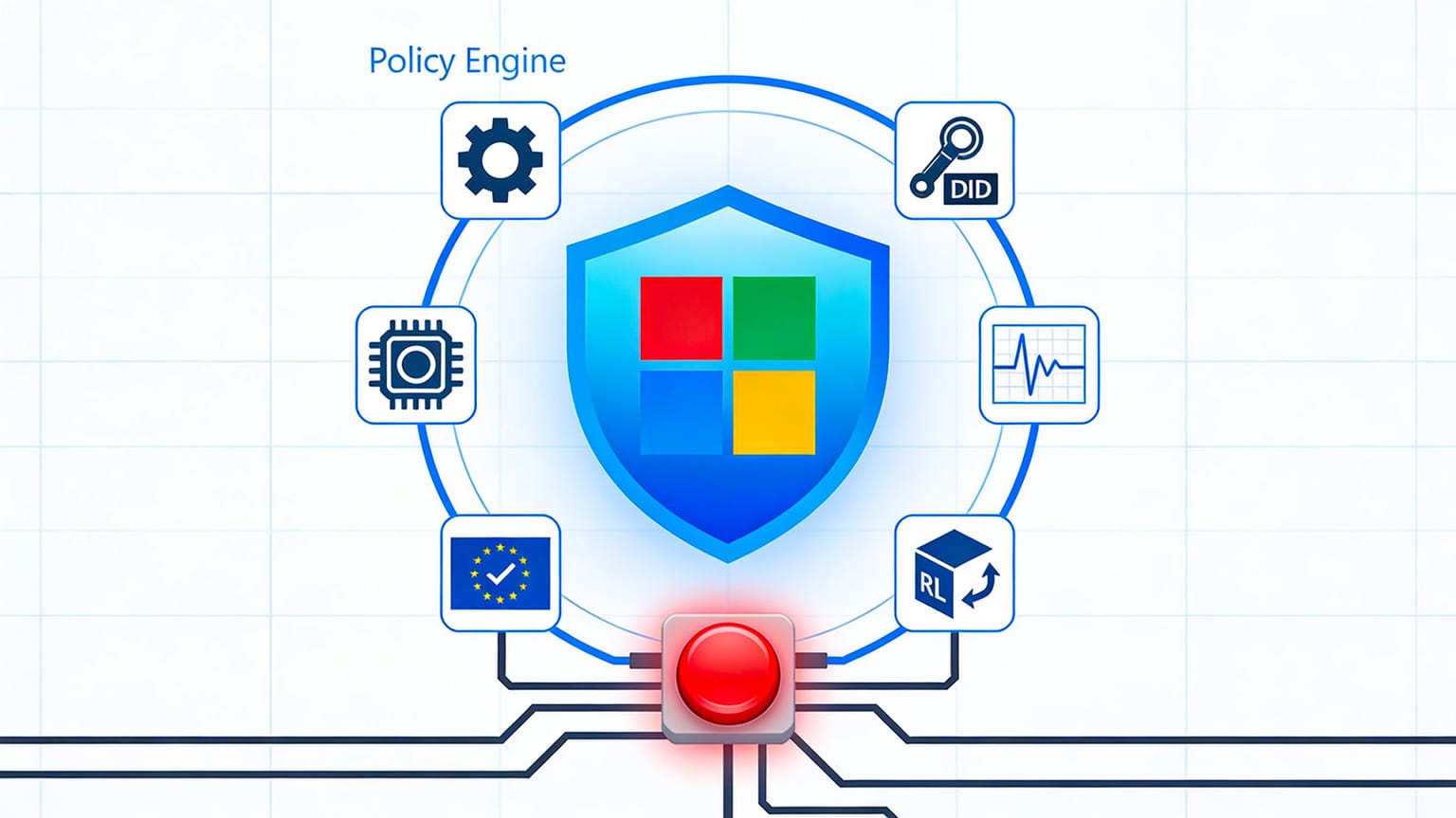

The Frontier Model Forum was created in 2023 by OpenAI, Anthropic, Google and Microsoft. Original goal: share best practices on AI safety. For two years, it operated as a discussion club. Principles. Statements. Not much concrete action.

The DeepSeek R1 shock changed everything. In early 2026, the Forum shifted to operational mode. New mission: real-time sharing of detection data. In practice: if Google detects an adversarial distillation pattern on the Gemini API, the detection data — attack fingerprints, actor identifiers, query signatures — is immediately shared with OpenAI and Anthropic so they can block the same actors on their own APIs.

It's exactly the same logic as banks sharing fraud patterns to block attackers. A threat intelligence system applied to AI models.
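To make the threat-intelligence analogy concrete, here is a minimal sketch of how one lab's detection could become a block on another lab's API. All names (`ThreatRecord`, `SharedBlocklist`, `query_signature`) are hypothetical, and hashing identifiers before sharing is an assumption of this sketch, not something the report describes. Exact-hash matching is also deliberately crude. A real signature would presumably have to tolerate paraphrased queries.

```python
import hashlib
from dataclasses import dataclass


def sha256_hex(value: str) -> str:
    """Hash a value so it can be compared without sharing the raw string."""
    return hashlib.sha256(value.encode("utf-8")).hexdigest()


def query_signature(text: str) -> str:
    """Normalize case and whitespace, then hash the query text."""
    normalized = " ".join(text.lower().split())
    return sha256_hex(normalized)


@dataclass(frozen=True)
class ThreatRecord:
    reporting_lab: str    # which member detected the pattern
    actor_id_hash: str    # hashed suspect actor identifier
    query_signature: str  # hashed, normalized query


class SharedBlocklist:
    """In-memory stand-in for a feed that every member can read and write."""

    def __init__(self):
        self._actors = set()
        self._signatures = set()

    def publish(self, record: ThreatRecord) -> None:
        self._actors.add(record.actor_id_hash)
        self._signatures.add(record.query_signature)

    def should_block(self, actor_id: str, query: str) -> bool:
        return (
            sha256_hex(actor_id) in self._actors
            or query_signature(query) in self._signatures
        )


feed = SharedBlocklist()
feed.publish(
    ThreatRecord(
        reporting_lab="lab-a",
        actor_id_hash=sha256_hex("acct-bulk"),
        query_signature=query_signature("Solve step by step: 17 * 23"),
    )
)

print(feed.should_block("acct-bulk", "hello"))                        # True: known actor
print(feed.should_block("acct-new", "solve STEP by step:  17 * 23"))  # True: known query
print(feed.should_block("acct-new", "hello"))                         # False
```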

The report, confirmed by Japan Times, specifies what is shared — and what isn't.

| Category | Shared | Not shared |
|---|---|---|
| API security | ✅ Attack fingerprints | - |
| Threat intelligence | ✅ Suspect actor identifiers | - |
| Distillation detection | ✅ Query signatures | - |
| Models | - | ❌ Weights, architectures |
| R&D | - | ❌ Training data |
| Business | - | ❌ Commercial strategies |

Model weights are not shared. Technical architectures are not shared. Proprietary training data stays proprietary. The cooperation is strictly about detecting and blocking adversarial distillation attempts.

DeepSeek R2 incoming — the urgency of timing

DeepSeek R2 is expected in 2026. If the three labs haven't blocked distillation before its release, R2 could benefit from Spud (OpenAI), Mythos (Anthropic) and Gemini 2.5 Pro (Google), the same way R1 is suspected of having benefited from GPT-4o.

This alliance isn't retrospective. It's preventive. It aims to protect the most powerful models in history before they even ship. Spud and Claude Mythos — the two most anticipated flagships of 2026 — are the most obvious targets.

| Member | Status | Protected models |
|---|---|---|
| OpenAI | Founder | GPT series, Spud |
| Anthropic | Founder | Claude series, Mythos |
| Google | Founder | Gemini series |
| Microsoft | Founder | Copilot / Azure AI |
| Mistral | Observer | Mistral series |

The timing is no coincidence. The Trump administration has deregulated AI domestically but maintains restrictions on AI exports to China (Export Control Reform Act, Entity List). This alliance is consistent with US policy: technological competition with China remains a national priority regardless of which party is in power.

The paradox: rivals in the morning, allies at night

Let's put things in context. OpenAI, Anthropic and Google are locked in fierce competition. Race for flagship models. Race for datacenters and compute. Race for talent — OpenAI is doubling its headcount. Race for enterprise deals.

Monday morning: Gemini 2.5 Pro overtakes Claude Opus on LMArena — benchmark warfare. Monday evening: Google shares its detection data with Anthropic — security cooperation.

This isn't hypocrisy. It's strategy. The same logic exists in other industries. Pharmaceutical companies share clinical safety data while competing brutally on drugs. Banks share fraud patterns while fighting for customers. Some threats are too large to handle alone.

Copying billions of dollars in R&D through a few weeks of API calls is exactly that kind of threat. If adversarial distillation isn't blocked, any actor, state or private, can copy Spud, Mythos or Gemini without the corresponding investment. Sam Altman, Dario Amodei and Sundar Pichai have the same problem. They figured it out at the same time.

Is Europe in the loop?

The Frontier Model Forum has four founding members: OpenAI, Anthropic, Google, Microsoft. All American. Mistral (France) is an observer member. The strategic question for Europe is direct: if US APIs are protected against distillation but European models aren't, the European ecosystem becomes the attack vector.

DeepSeek can simply pivot to Mistral or European open-source models. That's the scenario nobody wants to discuss in Brussels.

And the AI Act creates an additional paradox. It requires European labs to be more transparent about their models, which in turn makes them more vulnerable to distillation. The more you document your models, the easier you make it for those who want to copy them.

On China's side, if this distillation shortcut is blocked, the gap widens again. The likely consequence: Beijing will accelerate its own investments in compute and fundamental research. DeepSeek, Baidu, Alibaba DAMO, all racing to close the frontier gap, will have to find other paths.

This issue will land on desks in Brussels and Washington in the coming weeks. The question is on the table: will Mistral be integrated into the threat intelligence sharing? Or does the cooperation stay exclusively American? The Newsom executive order on AI regulation in California shows that even in the US, the line between regulation and technological competition remains blurry.

Key takeaways

- OpenAI, Anthropic and Google are cooperating via the Frontier Model Forum to block "adversarial distillation" — Bloomberg exclusive, April 6

- Adversarial distillation: flooding frontier APIs with millions of queries to copy US model reasoning patterns and train competing models without the R&D investment

- DeepSeek cited as primary actor — DeepSeek R2 expected in 2026, hence the preventive urgency of this alliance

- Shared in real time: attack fingerprints, actor identifiers, query signatures — not model weights or architectures

- Open question: are Mistral and Europe in the loop, or do they become the bypass attack vector?

In 2023, the Frontier Model Forum was a club of good intentions. In 2026, it's an operational defense system. The DeepSeek R1 shock changed everything: it proved you could copy years of frontier R&D in weeks. With Spud and Mythos about to ship — the two most powerful models ever built — OpenAI, Anthropic and Google can't afford to fight on two fronts at once. They compete on models. They cooperate on the survival of the investment that makes those models possible. It might be the most rational thing the AI industry has done this year.