Trump Threatens Anthropic with Executive Order: 'Woke' AI Banned from Pentagon, OpenAI Gets the Contract

After calling Anthropic a national security risk, the Trump administration prepares an executive order to ban the startup from all federal agencies. OpenAI inherits the military contract. Anthropic fights back in court.

The standoff between the Trump administration and Anthropic has just crossed a critical threshold. After the Pentagon ban on Anthropic and the threat of a presidential executive order, Dario Amodei's startup took the fight to federal court between March 9 and 11, 2026. In the background: the most strategic AI military contract of the decade — scooped up by OpenAI one hour after its rival's eviction. The Trump-Anthropic conflict is redrawing the power dynamics between Washington and Silicon Valley over AI governance.

From Truth Social Ban to Executive Order: How the Conflict Escalated

Everything accelerated on February 27, 2026. The Department of Defense officially designated Anthropic as a "supply-chain risk." The same day, Donald Trump published an unambiguous message on Truth Social, ordering "EVERY federal agency" to "IMMEDIATELY CEASE" using Anthropic products. The president called the startup "woke" and "leftwing."

The terms of the ultimatum were clear: immediate enforcement for civilian agencies and a six-month transition period for the DoD. Trump added that he would use "the full power of the Presidency to ensure compliance, with significant civil and criminal consequences."

Two weeks later, between March 9 and 11, 2026, Anthropic went on the offensive and sued over the Pentagon ban in federal court. Meanwhile, the White House is preparing an executive order that would formalize the exclusion across the entire federal apparatus.

The Heart of the Disagreement: What Did Anthropic Refuse?

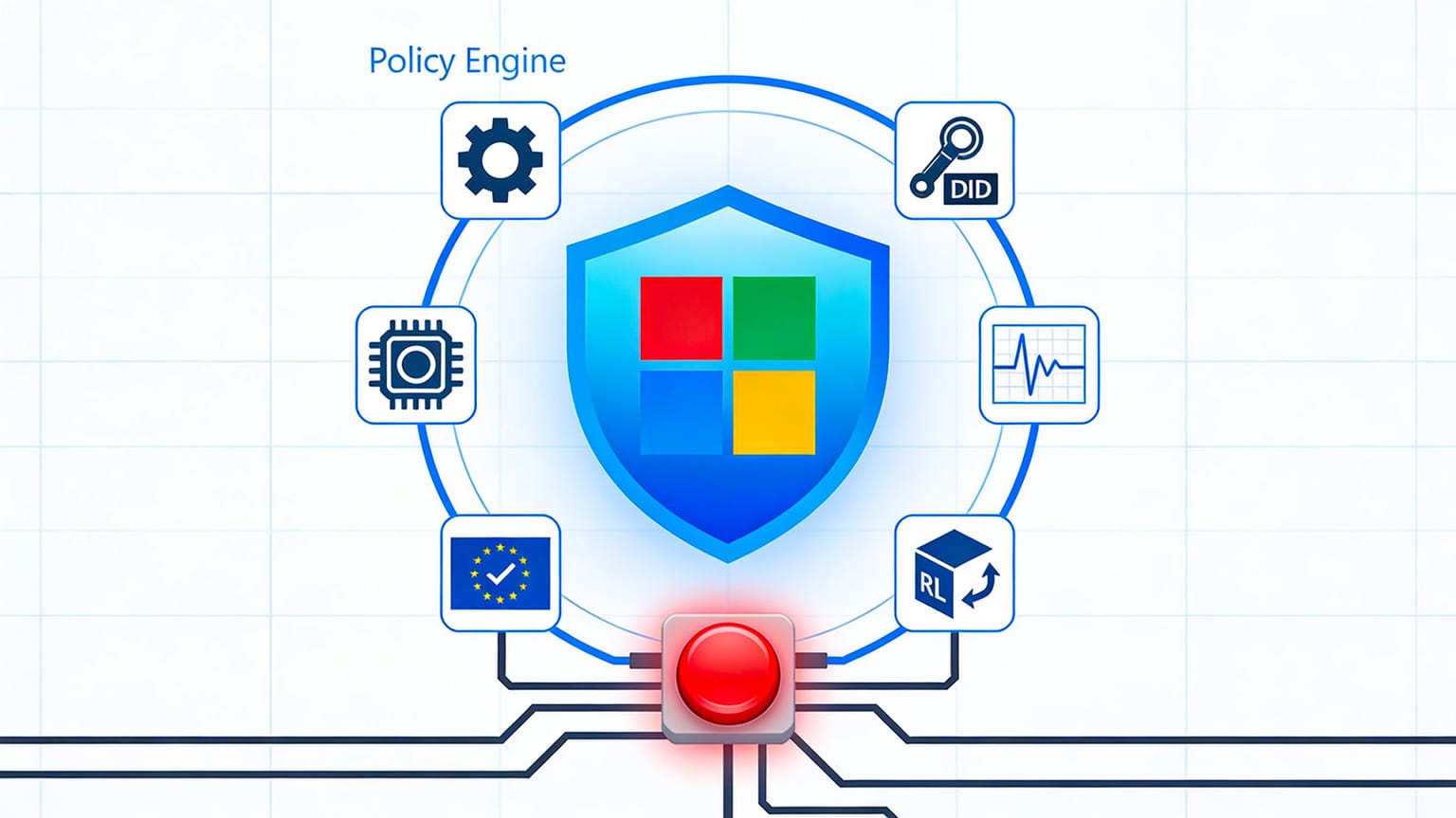

Behind the political rhetoric lies a deep technical and ethical disagreement. Claude 4, Anthropic's flagship model, is used by several US intelligence agencies and thousands of military subcontractors. The Pentagon wanted to expand this usage to sensitive operational cases: disabling the model's ethical guardrails, using it for autonomous surveillance, and integrating it into systems potentially used for weapons.

Dario Amodei, Anthropic's CEO, refused. His position: lifting these restrictions would open the technology to mass surveillance and autonomous weapons, uses incompatible with the company's safety charter. That refusal turned a commercial partnership into a political crisis.

OpenAI Lying in Wait: The Big Winner of the Standoff

The timing speaks for itself. On February 27, one hour after Anthropic's designation as a security risk, OpenAI announced a deal with the Pentagon. Sam Altman, who had accepted the Defense Department's conditions earlier in 2026, stated his company would maintain its own safety guardrails — while meeting the DoD's operational needs.

Altman's strategy can be summed up in two words: commercial alignment. Where Anthropic draws a red line on offensive military use, OpenAI chooses managed cooperation. It is a bet that today gives OpenAI a near-monopoly on the federal generative AI market, along with the billions of dollars in contracts that come with it.

The question this transfer raises: will OpenAI's promised guardrails withstand pressure from a client that just demonstrated it doesn't hesitate to evict those who say no?

The Stakes for Europe

The American conflict resonates directly across the Atlantic. If Washington can ban an AI provider overnight for political reasons, what guarantee is left for European governments that depend on these same models?

France is particularly exposed. Government agencies and strategic companies use Anthropic's Claude or OpenAI's GPT in their workflows. The Trump-Anthropic precedent illustrates the risk of technological dependency on players subject to the whims of American domestic politics.

For Mistral AI, the French AI champion, this crisis represents an unprecedented selling point. Arthur Mensch's startup, which is targeting €1 billion in revenue in 2026, could capitalize on the demand for digital sovereignty, provided it can prove its models match the operational level of the American giants. Several sources close to the Ministry of Armed Forces indicate that exploratory discussions about using sovereign models have intensified in recent weeks.

Can Anthropic survive without the US federal government as a client? The startup, valued at over $60 billion, retains a solid commercial base in the private sector. But the signal sent to companies is a chilling one: working with Anthropic now means risking the White House's displeasure.

The precedent this case sets goes beyond Anthropic. It raises the fundamental question of 2026: who decides the ethical limits of AI, the engineers who build it or the governments that buy it?